Claude Opus 4.7 Now Available in Amazon Bedrock for Advanced Coding and Agentic Workloads

News | 22.04.2026

Introducing Claude Opus 4.7 in Amazon Bedrock

Claude Opus 4.7, Anthropic’s most capable Opus model to date, is now available in Amazon Bedrock. The model is engineered for demanding production use cases such as agentic coding, long-running AI agents, document intelligence, and advanced professional workflows.

Running on Bedrock’s next-generation inference engine, Claude Opus 4.7 combines high reasoning performance with the enterprise infrastructure, security, and scalability organizations expect from AWS.

With Softprom, an official Amazon Web Services Partner, teams can rapidly adopt and operationalize this model for real production scenarios.

Why Claude Opus 4.7 matters

Claude Opus 4.7 introduces measurable improvements in areas critical to enterprise AI adoption:

- Stronger performance in agentic coding and complex code reasoning

- Better handling of ambiguous tasks with precise instruction following

- Improved results in knowledge work such as document creation and financial analysis

- Reliable execution of long-running tasks across very large context windows (up to 1M tokens)

- Enhanced vision capabilities with high-resolution image understanding for charts, UI screens, and dense documents

According to Anthropic’s benchmarks, the model achieves leading scores on SWE-bench Pro, SWE-bench Verified, Terminal-Bench 2.0, and Finance Agent evaluations, which points to real gains for engineering and analytical workloads.

Powered by Amazon Bedrock’s new inference engine

Claude Opus 4.7 in Amazon Bedrock benefits from a redesigned inference engine with advanced scheduling and scaling logic. This provides:

- High availability for steady-state enterprise workloads

- Rapid scaling for burst traffic

- Request queuing during peaks instead of failures

- Zero operator access to prompts and responses for maximum data privacy

Prompts and responses are not accessible to AWS or Anthropic operators, which helps meet strict enterprise security requirements.

Key enterprise use cases

Agentic coding and systems engineering

Claude Opus 4.7 extends the Opus line’s leadership in autonomous coding tasks, systems design, and long-horizon reasoning across complex software projects.

Professional knowledge work

The model excels at multi-step research, document generation, financial analysis, and tasks that require making assumptions carefully and then validating them.

Long-running AI agents

With a 1M-token context window, the model maintains coherence over extended sessions, which makes it well suited to persistent AI agents and multi-stage workflows.
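Even a very large context window fills up in a long agent session, so it can help to track how much of it is in use. The sketch below is one possible approach, not a documented Bedrock feature: it reads the `usage` field that the Converse API returns with each response and flags when a session should summarize or trim its history. The `ContextBudget` class, the 80% threshold, and the assumption that prompt caching is not in use are all illustrative.

```python
CONTEXT_WINDOW = 1_000_000  # from the article: up to 1M tokens


class ContextBudget:
    """Tracks how much of the context window a running conversation uses.

    Hypothetical helper. It assumes prompt caching is off, so that
    `inputTokens` covers the entire history sent with the last call.
    """

    def __init__(self, window: int = CONTEXT_WINDOW, compact_at: float = 0.8):
        self.window = window
        self.compact_at = compact_at  # arbitrary threshold, tune per workload
        self.used = 0

    def record(self, usage: dict) -> None:
        """Update from the `usage` dict of a Converse response."""
        # The input already includes the full history; the reply will be
        # appended to that history on the next turn.
        self.used = usage["inputTokens"] + usage["outputTokens"]

    @property
    def remaining(self) -> int:
        """Tokens still available before the window is full."""
        return max(0, self.window - self.used)

    def should_compact(self) -> bool:
        """True once usage crosses the compaction threshold."""
        return self.used >= self.compact_at * self.window
```

An agent loop would call `budget.record(response["usage"])` after each turn and summarize older messages once `should_compact()` returns true.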

Visual intelligence

High-resolution image support improves interpretation of dashboards, technical diagrams, and screen interfaces.
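To make the visual use case concrete, here is a minimal sketch of how an image and a question could be packaged as a single user message for the Bedrock Converse API. The block layout (`image`, `format`, `source.bytes`) follows the Converse message format used by existing models. That Claude Opus 4.7 accepts exactly these formats is an assumption, and the helper names are hypothetical.

```python
from pathlib import Path

# File suffix -> Converse image format name.
# Assumption: the same formats apply to Claude Opus 4.7.
SUPPORTED_FORMATS = {
    ".png": "png",
    ".jpg": "jpeg",
    ".jpeg": "jpeg",
    ".gif": "gif",
    ".webp": "webp",
}


def image_block(filename: str, data: bytes) -> dict:
    """Wrap raw image bytes in a Converse content block."""
    suffix = Path(filename).suffix.lower()
    if suffix not in SUPPORTED_FORMATS:
        raise ValueError(f"unsupported image type: {suffix or filename}")
    return {"image": {"format": SUPPORTED_FORMATS[suffix], "source": {"bytes": data}}}


def image_question(filename: str, data: bytes, question: str) -> dict:
    """Build one user message holding an image followed by a question."""
    return {
        "role": "user",
        "content": [image_block(filename, data), {"text": question}],
    }
```

The resulting message can be passed in the `messages` list of a `converse` call, for example with the bytes read from a dashboard screenshot.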

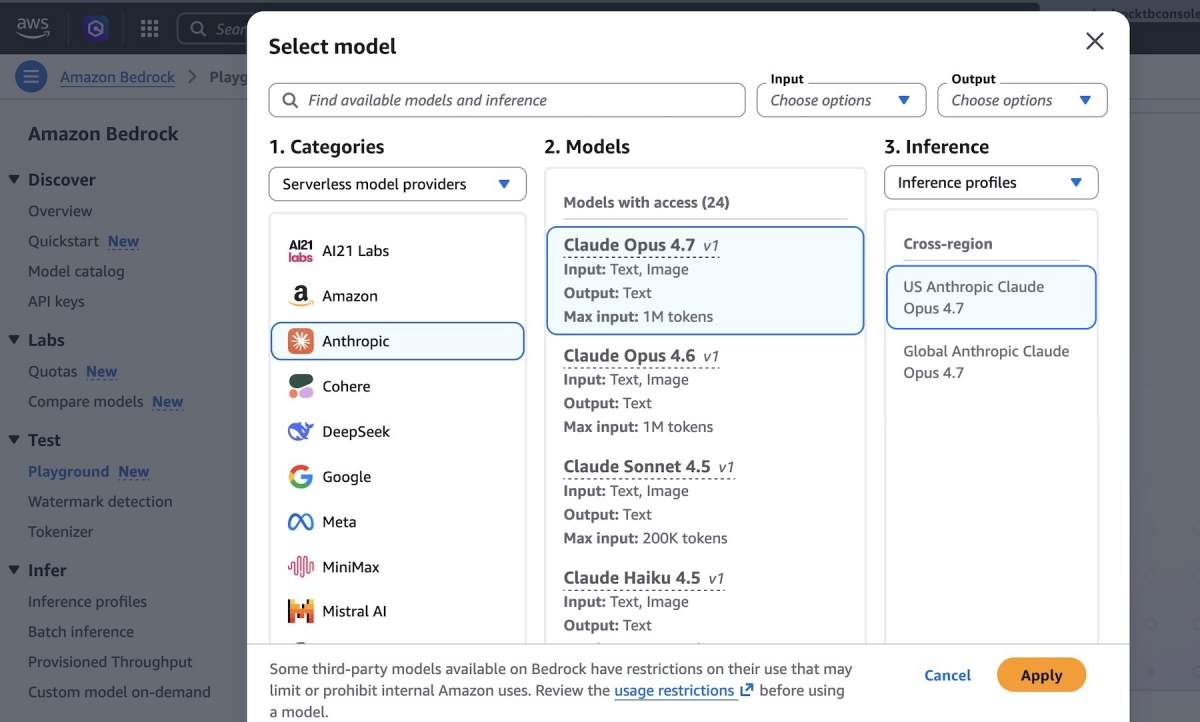

Getting started in Amazon Bedrock

You can begin testing Claude Opus 4.7 directly in the Bedrock console Playground. For programmatic access, teams can use:

- Anthropic Messages API via Bedrock runtime

- Bedrock Invoke and Converse APIs

- AWS CLI and AWS SDKs

Bedrock Quickstart also allows generating a short-term API key to begin testing in minutes.
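For teams going the SDK route, a first call through the Converse API with boto3 might look like the sketch below. The model ID is deliberately a placeholder, since the exact identifier should be copied from the Bedrock console, and the Region is an assumption. The function names are illustrative.

```python
# Placeholder: copy the real Claude Opus 4.7 model ID (or inference
# profile ID) from the Bedrock console for your Region.
MODEL_ID = "REPLACE_WITH_CLAUDE_OPUS_4_7_MODEL_ID"
REGION = "us-east-1"  # assumption: use a Region where the model is offered


def build_converse_request(prompt: str, max_tokens: int = 512) -> dict:
    """Build keyword arguments for the bedrock-runtime `converse` call."""
    return {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "inferenceConfig": {"maxTokens": max_tokens},
    }


def extract_text(response: dict) -> str:
    """Join the text blocks of a Converse response message."""
    blocks = response["output"]["message"]["content"]
    return "".join(block["text"] for block in blocks if "text" in block)


def ask(prompt: str) -> str:
    """Send one prompt to the model. Needs boto3 and AWS credentials."""
    import boto3  # imported lazily so the helpers above work without it

    client = boto3.client("bedrock-runtime", region_name=REGION)
    return extract_text(client.converse(**build_converse_request(prompt)))
```

With credentials configured and the placeholder replaced, `ask("Summarize this design doc in three bullets.")` would return the model's reply as a string.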

API flexibility

Teams can choose the interface that fits their architecture:

- Converse API for multi-turn conversations with guardrails

- Invoke API for low-level, direct model access

- Anthropic-compatible Messages API for streamlined integration
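The difference between the first two options shows up mainly in the request shape. The sketch below builds the same prompt both ways: as Converse arguments with an optional guardrail attached, and as an Invoke request whose body uses the Anthropic Messages format. The `anthropic_version` value is the one used by earlier Claude models on Bedrock and is assumed to be unchanged here. The model ID is a placeholder, and the guardrail identifier and version are values you would supply from your own account. The Anthropic-compatible Messages endpoint is not covered.

```python
import json

MODEL_ID = "REPLACE_WITH_CLAUDE_OPUS_4_7_MODEL_ID"  # placeholder, see console


def converse_kwargs(prompt: str, guardrail_id: str = None,
                    guardrail_version: str = None) -> dict:
    """Arguments for `client.converse`, optionally with a guardrail."""
    kwargs = {
        "modelId": MODEL_ID,
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
    }
    if guardrail_id and guardrail_version:
        kwargs["guardrailConfig"] = {
            "guardrailIdentifier": guardrail_id,
            "guardrailVersion": guardrail_version,
        }
    return kwargs


def invoke_kwargs(prompt: str, max_tokens: int = 512) -> dict:
    """Arguments for `client.invoke_model` with a Messages-format body."""
    body = {
        # Assumption: same version string as earlier Claude models on Bedrock.
        "anthropic_version": "bedrock-2023-05-31",
        "max_tokens": max_tokens,
        "messages": [
            {"role": "user", "content": [{"type": "text", "text": prompt}]}
        ],
    }
    return {
        "modelId": MODEL_ID,
        "contentType": "application/json",
        "accept": "application/json",
        "body": json.dumps(body),
    }


def parse_invoke_response(raw_body: bytes) -> str:
    """Extract reply text from the JSON body returned by `invoke_model`."""
    payload = json.loads(raw_body)
    return "".join(
        block["text"] for block in payload["content"] if block.get("type") == "text"
    )
```

In practice the Invoke response body is a stream, so the caller would pass `response["body"].read()` to `parse_invoke_response`.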

Enterprise scale and capacity

Bedrock’s infrastructure supports up to 10,000 requests per minute per account per Region by default, with higher limits available. During peak demand, requests are queued rather than dropped, ensuring reliability for production workloads.
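Server-side queuing does not remove the need for client-side care: a caller that exceeds its account quota can still receive throttling errors. As a hedged illustration, the sketch below retries a call with capped exponential backoff and full jitter. The helper names, the delay values, and the choice to retry only on `ThrottlingException` are assumptions for this example, not AWS recommendations.

```python
import random
import time


def backoff_delay(attempt: int, base: float = 0.5, cap: float = 20.0) -> float:
    """Upper bound in seconds for the wait after failed attempt `attempt` (0-based)."""
    return min(cap, base * (2 ** attempt))


def is_bedrock_throttle(exc: Exception) -> bool:
    """True for a botocore-style error whose code is ThrottlingException."""
    response = getattr(exc, "response", None) or {}
    return response.get("Error", {}).get("Code") == "ThrottlingException"


def call_with_retry(send, is_throttle=is_bedrock_throttle, max_attempts: int = 5,
                    sleep=time.sleep, rng=random.random):
    """Call `send()` and retry on throttling with jittered backoff.

    `sleep` and `rng` are injectable so the logic can be tested
    without real waiting or randomness.
    """
    for attempt in range(max_attempts):
        try:
            return send()
        except Exception as exc:
            # Re-raise anything that is not throttling, or the final failure.
            if not is_throttle(exc) or attempt == max_attempts - 1:
                raise
            sleep(rng() * backoff_delay(attempt))  # full jitter
```

A call such as `call_with_retry(lambda: client.converse(**kwargs))` would wrap an existing request. boto3 also ships its own retry modes, configurable through `botocore.config.Config(retries=...)`, which may be sufficient on their own.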

Conclusion

Claude Opus 4.7 in Amazon Bedrock represents a significant step forward for organizations building serious AI systems. With improved reasoning, coding autonomy, visual intelligence, and enterprise-grade infrastructure, teams can deploy AI agents and knowledge workflows with confidence. Start exploring Claude Opus 4.7 in Amazon Bedrock today and engage Softprom to accelerate your path to production.